RESEARCH TOPICS

Automatic and Semi-automatic Essay Grading

Evaluation of the Natural Language Parsers

Plagiarism Detection in Software Projects

Visualization of the Social Interaction in Virtual Environments

WHO & WHAT

People & Alumni

Publications

LINKS

Educational Technology @ Department of Computer Science

Department of Computer Science in University of Joensuu

FUNDERS

National Technology Agency of Finland (TEKES)

European Commission - Research Directorate

Discendum

Karjalainen

Lingsoft

Tikka Communications

Computer-assisted assessment refers to the use of computers in assessing students' learning outcomes. Methods for automating the assessment process have been developed to reduce the costs of essay grading. The need for computer-assisted assessment of learning outcomes is twofold. On the one hand, teachers need to automate the assessment and evaluation process, especially in mass courses. On the other hand, students want to get feedback and assess their own learning before an examination. Evaluation is a broad concept that covers both formal and informal feedback, given either explicitly or implicitly.

Our Java-based essay grading system for the Finnish language, AEA (Automatic Essay Assessor), is based on Latent Semantic Analysis (LSA). LSA is a corpus-based statistical method, related to the vector space model of information retrieval and to some neural network models, that provides a means to compare the semantic similarity of a source text and a target text. LSA can also be regarded as a computational model of human knowledge representation. Research has shown that the method can simulate learning and several other psycholinguistic phenomena (see Figure 1).
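The core LSA comparison can be sketched in a few lines. This is a minimal illustration under stated assumptions, not AEA's Java implementation. It builds a raw-count term-by-document matrix from texts that are assumed to be already lemmatized, keeps the k largest singular values of its SVD, folds documents into the reduced space as d^T U_k S_k^-1 (one common variant), and compares them with cosine similarity. Real LSA systems usually also apply a term weighting such as log-entropy, which is omitted here. The function names and the toy English corpus are hypothetical.

```python
import numpy as np


def term_doc_matrix(docs, vocab):
    """Raw term counts: one row per vocabulary term, one column per document."""
    index = {term: i for i, term in enumerate(vocab)}
    matrix = np.zeros((len(vocab), len(docs)))
    for j, doc in enumerate(docs):
        for token in doc:
            if token in index:  # words outside the vocabulary are ignored
                matrix[index[token], j] += 1
    return matrix


def build_lsa_space(matrix, k):
    """Truncated SVD: keep the k largest singular values and their term vectors."""
    u, s, _vt = np.linalg.svd(matrix, full_matrices=False)
    return u[:, :k], s[:k]


def fold_in(term_vector, u_k, s_k):
    """Project a document's term vector into the k-dimensional space (d^T U_k S_k^-1)."""
    return (term_vector @ u_k) / s_k


def cosine(a, b):
    """Cosine similarity; 0.0 if either vector is all zeros."""
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    return float(a @ b / denom) if denom else 0.0


# Toy corpus standing in for textbook sections and pre-graded essays.
docs = [
    ["learning", "memory", "student"],
    ["learning", "student", "teacher"],
    ["cell", "protein", "gene", "gene"],
    ["gene", "protein", "dna"],
]
vocab = sorted({t for d in docs for t in d})
A = term_doc_matrix(docs, vocab)
u_k, s_k = build_lsa_space(A, k=2)

essay = fold_in(term_doc_matrix([["learning", "student"]], vocab)[:, 0], u_k, s_k)
d0 = fold_in(A[:, 0], u_k, s_k)
d2 = fold_in(A[:, 2], u_k, s_k)
print(cosine(essay, d0), cosine(essay, d2))
```

Because the two toy topics share no terms and k = 2 keeps one latent dimension per topic, the new essay's cosine with the first document is close to 1 and with the third document close to 0. In a realistic space with many more dimensions, the similarities are graded rather than all-or-nothing.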

Figure 1: The grading process.

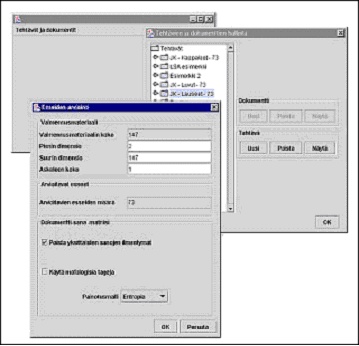

The system preprocesses the essays with a morphological parser for Finnish. The user can adjust a set of parameters to fine-tune the accuracy. The grade is computed using both essays previously graded by humans and a text from a textbook that represents the assignment. All information is stored in a MySQL database (see Figure 2).
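The text does not specify how a grade is derived from the pre-graded essays. One common approach in similarity-based graders, shown here purely as an assumption, is a k-nearest-neighbour rule: rank the pre-graded essays by their similarity to the new essay (for example, their LSA cosines), take the k most similar, and return their similarity-weighted mean grade, rounded and clamped to the zero-to-six scale. In this sketch the textbook text contributes only through the semantic space in which the similarities are computed. The function `grade_essay` and its parameters are hypothetical.

```python
import math


def grade_essay(similarities, grades, k=3, scale=(0, 6)):
    """Hypothetical k-NN grading: similarity-weighted mean grade of the
    k pre-graded essays most similar to the new essay."""
    if not grades or len(similarities) != len(grades):
        raise ValueError("need one similarity per pre-graded essay")
    # Keep the k reference essays with the highest similarity.
    nearest = sorted(zip(similarities, grades), key=lambda p: p[0], reverse=True)[:k]
    # Negative cosines are treated as carrying no evidence: clip them to zero.
    weights = [max(sim, 0.0) for sim, _ in nearest]
    total = sum(weights)
    if total > 0:
        mean = sum(w * g for w, (_, g) in zip(weights, nearest)) / total
    else:
        # No positive similarity: fall back to the plain mean of the neighbours.
        mean = sum(g for _, g in nearest) / len(nearest)
    low, high = scale
    # Round half up, then clamp to the grading scale.
    return min(high, max(low, math.floor(mean + 0.5)))


# Similarities of a new essay to four pre-graded essays, and their human grades.
print(grade_essay([0.9, 0.8, 0.1, 0.7], [5, 4, 1, 6]))  # → 5
```

Weighting by similarity lets a very close reference essay dominate, while k limits the influence of weakly related essays.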

Figure 2: The user interface of the essay grading system.

For our experiments, we collected 143 essays from an undergraduate course in education. A professor graded the essays on a scale from zero to six. The grading model was constructed from a relevant chapter of the course textbook and a subset of the essays. Both the essays and the course textbook were written in Finnish. Table 1 shows the results of our experiment.

Table 1: The results of the experiment.

In Table 1, the column labeled "training material" shows the composition of the training material used in each case. For example, in the case shown in the first row of the table, 5 sections from the textbook and 70 pre-graded essays were used to create the scoring model. The results are reported as the proportion of essays for which the system and the human grader assigned the same score (exact) and the proportion for which the system's grade was at most one point away from the human grade (exact or adjacent). The last column of Table 1 shows the Spearman rank correlation between the scores given by the human grader and those given by the system.
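The three measures reported in Table 1 are straightforward to compute. The sketch below assumes integer grades on the zero-to-six scale and computes Spearman's rank correlation as the Pearson correlation of average ranks, which handles tied grades correctly (ties are common on a seven-point scale). The function names and the sample grades are illustrative only, not data from the experiment.

```python
def exact_agreement(human, system):
    """Share of essays where the system gives exactly the human grade."""
    return sum(h == s for h, s in zip(human, system)) / len(human)


def adjacent_agreement(human, system):
    """Share of essays where the two grades differ by at most one point."""
    return sum(abs(h - s) <= 1 for h, s in zip(human, system)) / len(human)


def average_ranks(values):
    """1-based ranks; tied values share the mean of the ranks they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = (start + end) / 2 + 1
        start = end + 1
    return ranks


def spearman(human, system):
    """Spearman's rho as the Pearson correlation of the average ranks."""
    rx, ry = average_ranks(human), average_ranks(system)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    var_x = sum((a - mx) ** 2 for a in rx)
    var_y = sum((b - my) ** 2 for b in ry)
    return cov / (var_x * var_y) ** 0.5


human = [0, 1, 2, 3, 4, 5, 6]
system = [0, 2, 2, 3, 5, 5, 6]
print(exact_agreement(human, system), adjacent_agreement(human, system), spearman(human, system))
```

Note that `spearman` divides by zero if either grader gives every essay the same grade, since the rank correlation is undefined in that case.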

Although automating the essay grading process is not a new idea, it has been used only occasionally. Despite impressive results, automated scoring systems are not widely accepted or used by educators. Given teachers' increasing workloads, the reasons why teachers have successfully resisted automatic essay grading must also be sought in pedagogical thinking. Although fully automated scoring can be feasible on some occasions, we hypothesized that a system supporting only such a simple form of evaluation rests on the outdated ideas of behaviorism.

Computers should be used not just for grading but also for giving feedback and supporting the learner. Instead of grading a submitted essay in a black box, a semi-automatic essay evaluation environment would work together with learners while they are writing an essay. It parses the language, compares the text with the available learning materials, analyzes the style, grammar, vocabulary, structure, and argumentation of the essay, identifies its key sentences, and detects potential plagiarism. The student is aware of the evaluation process at all times and can intervene in it. The semi-automatic approach also means that the system works as a cognitive tool that helps the student progress as an essay author (see Figure 3).

Figure 3: The structure of the semi-automatic essay grading system.

Our current aim is to develop the system further to enable semi-automatic essay assessment that supports both the learners who write the essays and the teachers who grade them. We have several ideas for this:

- Give verbal feedback about the essay.

- Give a summary of the essay.

- Highlight the most relevant and irrelevant sentences of the essay.

- Give information about the structure, coherence and cohesion of the essay.

- Search for terms that should occur near each other, for example, in the same sentence.

- Give a possibility for self-evaluation of the essay.

- Detect plagiarism in the essays.
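The co-occurrence idea in the list above can be illustrated with a small sketch that reports which required term pairs never occur in the same sentence. It assumes a naive sentence splitter and matching on lowercased word forms. Because Finnish is highly inflected, the words would in practice have to be lemmatized first, for example with the morphological parser mentioned earlier. The term pairs would be supplied by the teacher; they and the function names are hypothetical.

```python
import re


def split_sentences(text):
    """Naive splitter: a sentence ends at '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def missing_cooccurrences(text, term_pairs):
    """Return the term pairs that never occur together within a single sentence."""
    # \w matches Unicode letters in Python 3, so Finnish letters such as ä and ö work.
    sentences = [set(re.findall(r"\w+", s.lower())) for s in split_sentences(text)]
    missing = []
    for a, b in term_pairs:
        a, b = a.lower(), b.lower()
        if not any(a in words and b in words for words in sentences):
            missing.append((a, b))
    return missing


essay = ("Behaviorism stresses reinforcement. "
         "Constructivism stresses the learner's own activity.")
pairs = [("behaviorism", "reinforcement"), ("constructivism", "reinforcement")]
print(missing_cooccurrences(essay, pairs))  # → [('constructivism', 'reinforcement')]
```

Such a list of missing pairs could be shown to the student as a hint about concepts the essay does not yet connect.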

Research Assistants Tuomo Kakkonen, Niko Myller and Jari Timonen, firstname.lastname@cs.joensuu.fi

(Master's Thesis of Tuomo Kakkonen, in Finnish)

Computer-assisted Essay Evaluation [PowerPoint presentation]